Hello fellow gamers! Did you know that you can run an AI language model like ChatGPT on your own gaming PC? In fact, you can install your own large language model in under 10 minutes! This video tutorial will walk you through the entire setup. Let’s start by searching for “oobabooga LLM” on Google, which will take you to this site. Scroll down a bit and click “Download.”

Now go to your downloads folder and copy the oobabooga archive to a better location, such as the “Documents” folder. Then extract the files by right-clicking the downloaded archive, selecting “Extract,” and following the prompts. Next, open a terminal in the extracted directory by right-clicking inside it and selecting “Open Terminal Here.” Finally, type “ls” to list the files. Project page: https://github.com/oobabooga/text-generation-webui
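If you would rather script the extract-and-list step than click through the file manager, here is a minimal sketch using only the Python standard library. The helper names `extract_archive` and `list_names` are made up for this example, and the archive path you pass in is whatever your download is actually called.

```python
import shutil
from pathlib import Path


def extract_archive(archive: Path, dest_dir: Path) -> Path:
    """Unpack an archive (zip, tar, ...) into dest_dir and return dest_dir."""
    dest_dir.mkdir(parents=True, exist_ok=True)
    # unpack_archive picks the format from the file extension.
    shutil.unpack_archive(str(archive), str(dest_dir))
    return dest_dir


def list_names(folder: Path) -> list[str]:
    """Sorted names of the entries directly inside folder, like a plain `ls`."""
    return sorted(p.name for p in folder.iterdir())
```

Call `extract_archive` with the path of the downloaded archive and a target folder inside “Documents,” then pass the result to `list_names` to see what was unpacked.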

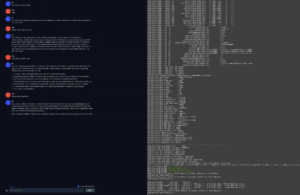

Now let’s begin the setup process by executing the command “sudo ./start_linux.sh”. After it finishes loading, you will be presented with several options for your graphics card. Since this tutorial uses an AMD graphics card, choose option B. This step might take a few minutes, so we will skip ahead to downloading and setting up the AI model:

The model used in this tutorial is hosted here: https://huggingface.co/TheBloke/Yi-34B-Chat-GGUF

Copy the model name (TheBloke/Yi-34B-Chat-GGUF) to the clipboard and paste it into the “Download model or LoRA” box.

Next, click the “Get File List” button in the web interface and wait for it to retrieve the list of files in that repository. Now paste the file name yi-34b-chat.Q3_K_L.gguf into the text box labeled GGUF and click “Download.”
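If you want to fetch the same file outside the web interface, for example with your browser or a download manager, you can build its direct link yourself. This is a minimal sketch that assumes Hugging Face's usual `resolve/main` download path; the function name `gguf_download_url` is made up for this example.

```python
from urllib.parse import quote


def gguf_download_url(repo_id: str, filename: str, revision: str = "main") -> str:
    """Build the direct-download URL for one file in a Hugging Face model repo."""
    # repo_id looks like "owner/name": keep the slash, escape anything unusual.
    repo_part = quote(repo_id, safe="/")
    return (
        "https://huggingface.co/"
        f"{repo_part}/resolve/{quote(revision, safe='')}/{quote(filename, safe='')}"
    )


# The repository and file name used in this tutorial.
url = gguf_download_url("TheBloke/Yi-34B-Chat-GGUF", "yi-34b-chat.Q3_K_L.gguf")
print(url)
```

A file downloaded this way still has to be placed in the web UI's models folder before it shows up in the model drop-down menu.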

This process might take some time, but once it’s finished, you can proceed to load the model by clicking “Refresh” in the web interface and selecting your newly downloaded AI model from the drop-down menu.

Before loading the model, increase the value of n-gpu-layers to 65 so that the model's layers are offloaded to the GPU. A model this large needs a lot of video memory, which is why it is loaded here on an AMD Radeon RX 7900 XTX with 24 GB of VRAM. If your GPU is an AMD Radeon RX 6800 XT (16 GB of VRAM), you should use a smaller model instead, such as LLaMA2-13B-Psyfighter2.Q4_K_M.gguf from https://huggingface.co/KoboldAI/LLaMA2-13B-Psyfighter2-GGUF. Once everything’s set up correctly, click “Load” and wait for the model to load into the GPU memory of your gaming PC.
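To get a feel for how many layers your own card can hold, here is a rough back-of-the-envelope sketch. It assumes every layer is the same size and that some VRAM must stay free for the context and the desktop; the function name `layers_that_fit`, the 2 GB reserve, and the example model size and layer count are illustrative assumptions, not measured values.

```python
def layers_that_fit(model_size_gb: float, total_layers: int,
                    vram_gb: float, reserve_gb: float = 2.0) -> int:
    """Rough count of model layers that fit in VRAM, assuming equal-sized layers."""
    if model_size_gb <= 0 or total_layers <= 0:
        raise ValueError("model_size_gb and total_layers must be positive")
    per_layer_gb = model_size_gb / total_layers
    # Keep some VRAM free for the context cache and whatever else uses the GPU.
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(total_layers, int(usable_gb // per_layer_gb))


# Illustrative numbers only: an 18 GB model file split into 60 layers.
print(layers_that_fit(18.0, 60, vram_gb=24.0))  # 24 GB card
print(layers_that_fit(18.0, 60, vram_gb=16.0))  # 16 GB card
```

With these example numbers the whole model fits on the 24 GB card but not on the 16 GB one, which matches the advice to pick a smaller model for the RX 6800 XT. Treat the result as a starting point and lower n-gpu-layers if loading fails.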

Finally, you can ask the AI a question by typing it into the chat box and pressing Enter: “What places should I visit in Toronto?” The model will answer based on what it learned during training. Enjoy exploring Toronto through the eyes of your newly installed large language model!
